Reference: Pennycook, G., Bear, A., Collins, E. T., & Rand, D. G. (2020). The implied truth effect: Attaching warnings to a subset of fake news headlines increases perceived accuracy of headlines without warnings. Management Science.


Throngs of people around the world are scrambling to get the COVID-19 vaccine. Yet many have no intention of doing so. Why? Fake news posts on social media spin lies about the vaccine. One outrageous claim is that the vaccine harbors a microchip that tracks patients’ personal information. Another myth is that the vaccine causes female infertility. A third warns that the COVID-19 vaccine alters recipients’ DNA. These and other false claims threaten the public good by discouraging individuals from getting vaccinated, endangering their own health and that of their communities.

Cognitive scientists are investigating practical strategies to mitigate belief in fake news and to halt its spread. Attaching warnings to news posts that fail the scrutiny of third-party fact-checkers, a practice instituted by Facebook after the 2016 U.S. presidential election, is one such strategy under investigation. This practice is intuitively appealing: warnings about the truthfulness of online posts should plant seeds of doubt in readers’ minds. However, evidence for the efficacy of this approach is mixed. Researchers Gordon Pennycook, Adam Bear, Evan Collins, and David Rand attempted to tease apart some of the contradictory evidence regarding this practice.

Previous studies demonstrate that attaching warnings to fake news can reduce belief in misinformation, a phenomenon known as the warning effect (1, 2, 3). But the warning effect does not always hold. Warnings can backfire and increase belief in false content, particularly when the warnings contradict users’ values (4, 5, 6, 7). One explanation for such backfiring is that people use motivated reasoning to reject facts and arguments that threaten their identities (8).

Pennycook and his colleagues proposed an alternate model to explain why warning tags backfire. Their model, inspired by Bayesian probability, predicted the likelihood that participants would endorse or reject tagged and untagged headlines that matched or were incongruent with their political views. The model, called the implied truth effect, framed participants’ prior beliefs about the veracity of each headline as the existing evidence and participants’ beliefs that the headlines had been accurately vetted as the future events.
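The Bayesian intuition can be illustrated with a minimal sketch. This is not the authors' model: the function name `posterior_false` and every probability below are hypothetical, chosen only to show how the absence of a tag can shift a reader's belief once some false headlines are known to carry tags.

```python
def posterior_false(prior_false, p_tag_if_false, p_tag_if_true=0.0, tagged=False):
    """Return P(headline is false | tag status) using Bayes' rule.

    prior_false    -- reader's prior belief that the headline is false
    p_tag_if_false -- assumed chance that a false headline receives a warning tag
    p_tag_if_true  -- assumed chance that a true headline is tagged by mistake
    tagged         -- whether the headline the reader sees carries a tag
    """
    if tagged:
        like_false, like_true = p_tag_if_false, p_tag_if_true
    else:
        like_false, like_true = 1.0 - p_tag_if_false, 1.0 - p_tag_if_true
    evidence = like_false * prior_false + like_true * (1.0 - prior_false)
    if evidence == 0.0:
        raise ValueError("tag status is impossible under these assumptions")
    return like_false * prior_false / evidence


# Hypothetical reader who starts out undecided about a headline.
prior = 0.5

# No tagging anywhere: a missing tag carries no information.
no_tagging = posterior_false(prior, p_tag_if_false=0.0)

# Half of all false headlines are tagged: a missing tag now counts as
# evidence that the headline is true.
half_tagged = posterior_false(prior, p_tag_if_false=0.5)

print(f"P(false | untagged), no tagging in use: {no_tagging:.2f}")
print(f"P(false | untagged), half of false headlines tagged: {half_tagged:.2f}")
```

Under these made-up numbers, the belief that an untagged headline is false drops from one-half to one-third, which is the direction of the implied truth effect.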

Motivated reasoning or implied truth?

To test their model, Pennycook and colleagues conducted two experiments that measured participants’ ratings of the accuracy of tagged and untagged true and false headlines whose veracity had been vetted by third-party fact-checkers. All headlines had a partisan slant, with an equal distribution of pro-Republican/anti-Democrat and pro-Democrat/anti-Republican content. Headlines were formatted to resemble Facebook posts, with an opening, or lede, sentence of a news article and a photograph. Participants were active social media users and an equal mix of self-reported Republicans and Democrats.

In the warning group, half of the false headlines that participants saw were tagged with a warning: a red exclamation mark and the text "Disputed by 3rd Party Fact-Checkers" (see figure below). The remaining false headlines seen in the warning group, and all true headlines, were left untagged, as were all headlines seen by participants in the control group.

Did warnings work? Yes. A greater percentage (17%) of untagged false headlines were rated as accurate by participants in the control group, compared to the percentage (14.5%) of tagged false headlines rated as accurate by participants in the warning group. This result supported the warning effect. Furthermore, there was no evidence that warning tags backfired.

Was there evidence for the implied truth effect? Yes. A larger percentage (19%) of untagged false headlines were rated as accurate in the warning group, compared to the percentage (17%) of untagged false headlines rated as accurate in the control group. This result supports the implied truth effect: when some false headlines carry warning tags, participants are more inclined to assume that untagged headlines are true.
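The two Study 1 comparisons reduce to simple differences in percentage points. The short sketch below restates them using only the percentages reported above; the dictionary keys and variable names are illustrative labels, not terms from the paper.

```python
# Percentage of false headlines rated as accurate in Study 1 (figures from the text).
study1 = {
    "control_untagged_false": 17.0,
    "warning_tagged_false": 14.5,
    "warning_untagged_false": 19.0,
}

# Warning effect: tagged false headlines are believed less than the control baseline.
warning_effect = study1["control_untagged_false"] - study1["warning_tagged_false"]

# Implied truth effect: untagged false headlines are believed more when other
# headlines carry tags than when no headline does.
implied_truth_effect = study1["warning_untagged_false"] - study1["control_untagged_false"]

print(f"Warning effect: {warning_effect:.1f} percentage points")
print(f"Implied truth effect: {implied_truth_effect:.1f} percentage points")
```

Both differences are measured against the same control baseline of 17%, which is why the benefit for tagged headlines and the cost for untagged ones can be weighed against each other directly.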

Amplifying warnings and disambiguating truth

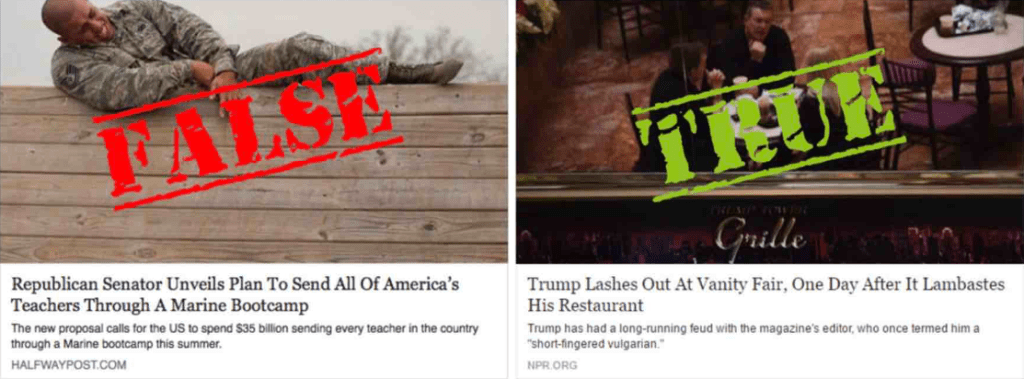

In the second study, Pennycook and colleagues ramped up the visibility of the warning tags and added a second warning group (warning + verification). Participants’ intentions to share headlines were the primary dependent variable in the second experiment. In the warning group, the tag morphed from inconspicuous text to large red font reading FALSE stamped across photos. In the added warning + verification group, the veracity of some true posts was made clear by large green font spelling TRUE stamped across photos (see figure below). In both warning groups, three-quarters of all headlines were tagged as true or false, leaving one-quarter of headlines untagged. The meaning of the warning was clarified to both groups with an explanation of the process of third-party fact checking. Control group procedures were identical to those in the first study.

Right: Tagged and stamped true headline, as shown to participants in the Warning + Verification treatment of Study 2.

Did the warning effect hold? Yes. Participants in the warning group were willing to share fewer (16.1%) tagged false headlines than participants in the control group, who were willing to share 29.8% of untagged false headlines.

A similar warning effect was seen by comparing the warning + verification group and the control group.

Did the amplified warnings prompt a backfire effect? No. Participants who saw warnings that challenged their political views were willing to share a smaller percentage (14.7%) of tagged false headlines than control group participants, who indicated they would share 26.0% of untagged false headlines.

Did the implied truth effect hold? Yes, and there were interactions with headline veracity. For false headlines, participants in the warning condition were willing to share 36.2% of untagged headlines, compared to 29.8% in the control group. For true headlines, participants in the warning condition were willing to share 44.5% of untagged headlines, compared to 40.9% in the control group.

Did the truth stamp lead to a verification effect? Yes. Participants in the warning + verification group considered sharing a larger percentage (46%) of verified true headlines, compared to participants in the control group, who were willing to share 40.9% of unverified true headlines.
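The Study 2 results can be summarized the same way, as differences in sharing intentions measured in percentage points. The sketch below uses only the percentages quoted above; the helper `pp_diff` and the effect labels are hypothetical names introduced for illustration.

```python
def pp_diff(treatment, control):
    """Difference in percentage points, rounded to one decimal place."""
    return round(treatment - control, 1)


# (treatment %, control %) of headlines participants were willing to share in Study 2.
comparisons = {
    "warning effect (tagged false)": (16.1, 29.8),
    "no backfire (tagged false, politically discordant)": (14.7, 26.0),
    "implied truth (untagged false)": (36.2, 29.8),
    "implied truth (untagged true)": (44.5, 40.9),
    "verification effect (stamped true)": (46.0, 40.9),
}

effects = {name: pp_diff(t, c) for name, (t, c) in comparisons.items()}

for name, diff in effects.items():
    direction = "more" if diff > 0 else "less"
    print(f"{name}: {abs(diff):.1f} points {direction} sharing than control")
```

Negative values mean the treatment reduced sharing relative to control, as with the warning effect. Positive values mean it increased sharing, as with the implied truth and verification effects.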

Implications for warnings and verifications

Pennycook and colleagues demonstrated a previously undocumented phenomenon, the implied truth effect: when false headlines come with warnings, users infer that untagged headlines are true and are more willing to believe and share them. The authors also found that removing the ambiguity surrounding untagged true headlines reversed the implied truth effect. Neither study showed evidence for a backfire effect, whereby users reject political information that is inconsistent with their beliefs. These results call into question motivated reasoning accounts explaining the spread of false political information on social media sites. The results also suggest that social media platforms can impede the spread of misinformation by using third-party fact-checkers to warn against lies and endorse truth.

Additional References:

- Ecker, U. K. H., Lewandowsky, S., & Tang, D. T. W. (2010). Explicit warnings reduce but do not eliminate the continued influence of misinformation. Memory & Cognition, 38(8), 1087–1100.

- Lewandowsky, S., Ecker, U. K. H., Seifert, C. M., Schwarz, N., & Cook, J. (2012). Misinformation and its correction: Continued influence and successful debiasing. Psychological Science in the Public Interest, 13(3), 106–131.

- Chan, M. S., Jones, C. R., Hall Jamieson, K., & Albarracín, D. (2017). Debunking: A meta-analysis of the psychological efficacy of messages countering misinformation. Psychological Science, 28(11), 1531–1546.

- Nyhan, B., & Reifler, J. (2010). When corrections fail: The persistence of political misperceptions. Political Behavior, 32(2), 303–330.

- Nyhan, B., Reifler, J., & Ubel, P. A. (2013). The hazards of correcting myths about healthcare reform. Medical Care, 51(2), 127–132.

- Schaffner, B. F., & Roche, C. (2016). Misinformation and motivated reasoning: Responses to economic news in a politicized environment. Public Opinion Quarterly, 81(1), 86–110.

- Berinsky, A. J. (2017). Rumors and healthcare reform: Experiments in political misinformation. British Journal of Political Science, 47(2), 241–262.

- Kahan, D. M. (2017). Misconceptions, misinformation, and the logic of identity-protective cognition. Cultural Cognition Project Working Paper 164, Yale University, New Haven, CT.