Reference: Riels, K., Ramos Campagnoli, R., Thigpen, N., Keil, A. (2021). Oscillatory brain activity links experience to expectancy during associative learning. Psychophysiology e13946.

A vast majority of living organisms, and at this point many computer programs as well, make decisions based on their previous experiences. This may seem like an obvious and simple statement, but it is a fundamental aspect of survival and has left humanity with seemingly insurmountable problems and questions. For example, how do we know which avocado to buy at the store? Based on previous experience, we may want to go with one with dark skin and a little (but not too much) give when we press on its skin. But how many encounters with avocados do we need to finally learn how to identify the ‘perfect’ avocado? This idea of how an association forms over time is especially relevant to fear and anxiety research. If we can better characterize what learning looks like, we may be able to better understand how someone comes to associate various situations with unpleasant feelings of fear and anxiety. Researchers Riels, Ramos Campagnoli, Thigpen, and Keil (2021) conducted a study to investigate how the brain forms simple associations over the course of an hour.

What did they do?

The researchers used EEG (electroencephalography) to measure electrical signals from the brain that make it to the scalp. One particular range of electrical signals is the “alpha band,” which typically spans 8-13 Hz (cycles per second) and is often associated with external sensory perception and the amount of internal and external attention someone pays to the world around them.
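To make "alpha power" concrete, here is a minimal sketch of how the strength of a signal within the 8-13 Hz band could be measured. It uses a plain discrete Fourier transform on a made-up signal; the function name `band_power`, the toy signal, and the 200 Hz sampling rate are all assumptions for illustration, and this is not necessarily the method the researchers used.

```python
import cmath
import math

def band_power(signal, sampling_rate, low_hz, high_hz):
    """Mean spectral power of `signal` between low_hz and high_hz (inclusive)."""
    n = len(signal)
    powers = []
    for k in range(1, n // 2):  # positive-frequency DFT bins
        freq = k * sampling_rate / n
        if low_hz <= freq <= high_hz:
            # Naive DFT coefficient for this frequency bin.
            coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                        for i, x in enumerate(signal))
            powers.append(abs(coeff) ** 2 / n ** 2)
    return sum(powers) / len(powers)

# Toy "EEG" (not real data): a 10 Hz alpha rhythm plus a weaker 25 Hz rhythm,
# one second long, sampled at a hypothetical 200 Hz.
sampling_rate = 200
times = [i / sampling_rate for i in range(sampling_rate)]
toy_eeg = [math.sin(2 * math.pi * 10 * s) + 0.3 * math.sin(2 * math.pi * 25 * s)
           for s in times]

alpha_power = band_power(toy_eeg, sampling_rate, 8, 13)
beta_power = band_power(toy_eeg, sampling_rate, 20, 30)
print(alpha_power > beta_power)
```

In this toy signal the 10 Hz rhythm dominates, so the 8-13 Hz band carries more power than the 20-30 Hz band.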

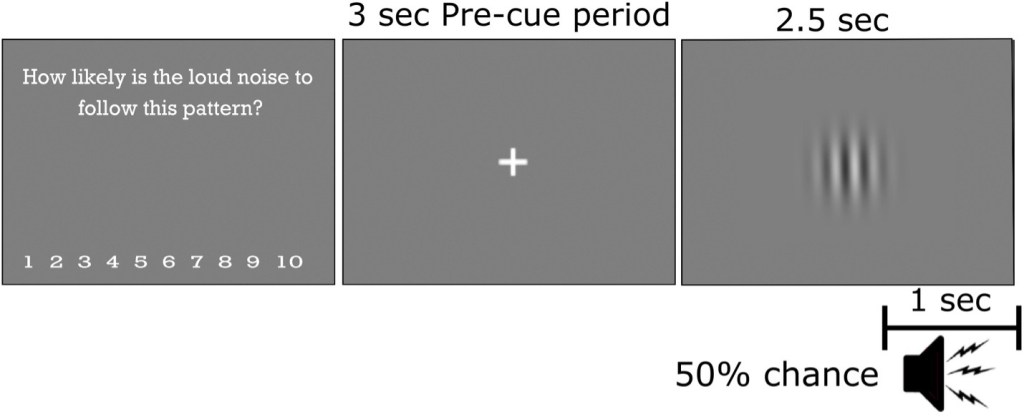

Participants stared at a white cross on a gray screen for 3 seconds, which was followed by a simple gray and white fuzzy striped circle called a Gabor patch. Unbeknownst to participants, there was a 50% chance this patch would be paired with a very loud and startling noise burst. Participants were asked to rate how likely they thought a loud noise burst would appear in the next trial. This procedure repeated for 120 trials.

The researchers hypothesized that brain data, specifically brain waves in the alpha frequency range, would be an indicator of the trial-by-trial changes in the strength of association between the gray and white patch and the loud noise burst. They also hypothesized that the pattern of these brain waves recorded from the scalp would predict the participants’ past experience of noise versus no noise and expectancy ratings.

Figure reprinted with permission.

What did they find?

The researchers found that people generally expected a loud noise in the next trial if they had just heard one in the previous trial, but they lowered that expectation after a trial without a noise.

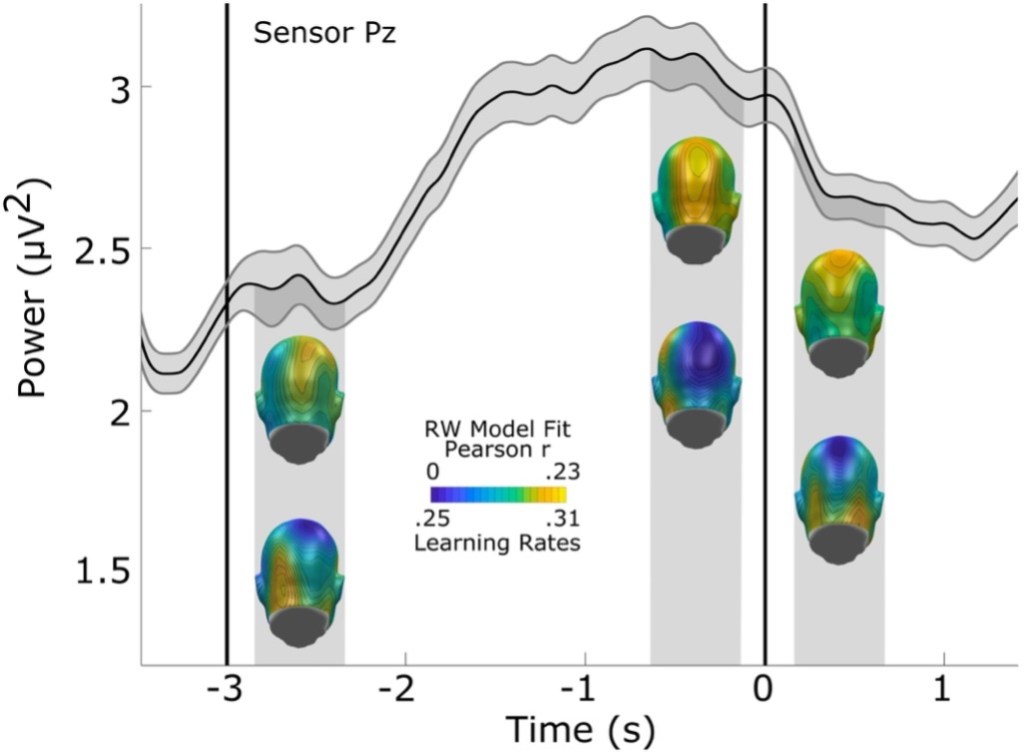

Figure reprinted with permission.

In the brain data, the alpha range brain waves increased in size in the “waiting” part of the trial leading up to when the gray and white patch was about to come on screen. When the patch came on, these brain waves decreased (this pattern is usually referred to as “blocking” and is often used as an indicator of increased external attention or an increase in sensory processing). In the figure below, the wavy line shows alpha power in an average trial over the 3 seconds before the gray and white circle appeared and the 1.5 seconds after it appeared (i.e., while the circle was on the screen, but before the loud noise). The researchers chose an established theory of association formation and used that mathematical model to predict the brain data in each trial based on what the participants experienced. The blue and yellow heads show how well this model fit the actual brain data. The top row of heads shows that the model was most closely related to the real data at the back of the head (i.e., where they saw the most alpha brain waves on the scalp). The bottom row of heads doesn’t show model accuracy, but rather more detailed information about the model – that is, how quickly someone updates their associations.

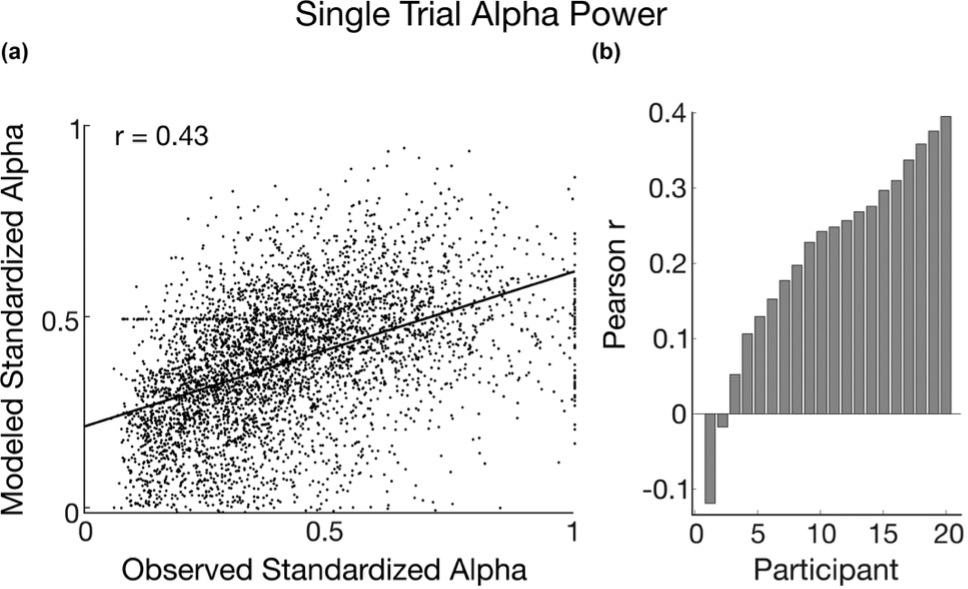

Figure reprinted with permission.
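The idea of a mathematical model that predicts associative strength from trial-by-trial experience can be illustrated with a short sketch. This summary does not name the model, so the example below assumes a Rescorla-Wagner-style update rule, one widely used model of association formation. The function name, the learning rates, and the simulated 50% noise schedule are illustrative assumptions, not the study's actual values.

```python
import random

def rescorla_wagner(outcomes, learning_rate, initial_strength=0.0):
    """Return the associative strength held *before* each trial.

    outcomes: 1 if the loud noise occurred on that trial, 0 if not.
    learning_rate: how quickly associations are updated (between 0 and 1).
    """
    strength = initial_strength
    history = []
    for outcome in outcomes:
        history.append(strength)
        prediction_error = outcome - strength  # surprise on this trial
        strength += learning_rate * prediction_error
    return history

# Simulated schedule (not the study's data): 120 trials, 50% chance of noise.
rng = random.Random(0)
outcomes = [1 if rng.random() < 0.5 else 0 for _ in range(120)]

slow_learner = rescorla_wagner(outcomes, learning_rate=0.1)
fast_learner = rescorla_wagner(outcomes, learning_rate=0.6)

# A tiny worked case: noise, then no noise, then noise, with a rate of 0.5.
print(rescorla_wagner([1, 0, 1], learning_rate=0.5))  # [0.0, 0.5, 0.25]
```

A higher learning rate makes the predicted association swing more strongly after each trial. This is the kind of "how quickly someone updates their associations" parameter that the bottom row of heads describes.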

Across all possible data points, recorded alpha was positively associated with predicted associative strength. In 18 out of 20 participants, observed brain wave power changes followed the same patterns as predicted associative strength changes over time. As the predicted association between the grayscale patch and the loud noise increased, so did the alpha brain wave power. This means that as a participant learned that the visual patch predicted a loud noise, that person’s alpha waves increased in size. This relationship between alpha and learning is important because it demonstrates that these particular brain waves track or reflect this simple learning.

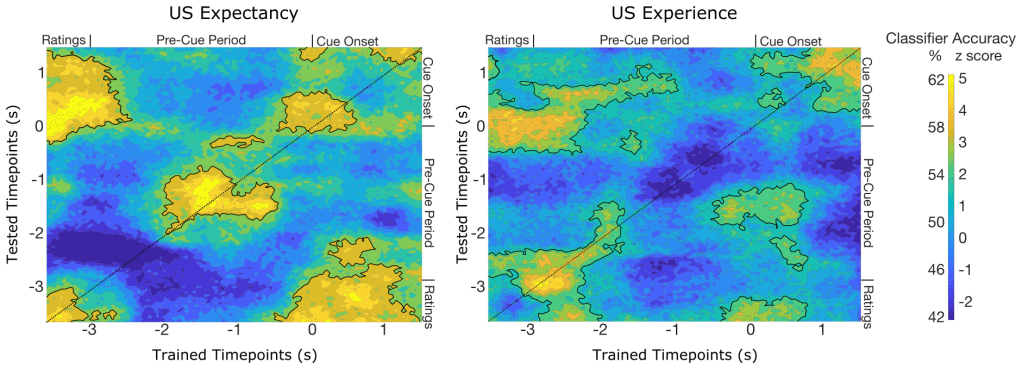

In a third approach to data analysis, the researchers used an artificial intelligence approach to look for spatial patterns in alpha power over the course of a trial. They used a binary classifier that took the brain data at a single time point from 2 different types of trials – for example, trials following a loud noise and trials following no loud noise – and they did this on a timepoint-by-timepoint basis. For example, the researchers trained a classifier on the one millisecond (timepoint) exactly 3 seconds before the grayscale image appeared. The classifier took data from this one millisecond across a large subset of trials and learned which trials were “loud” versus “quiet” (i.e., whether the brain data from that millisecond came after a loud noise or a silent trial). It was then tested on trials that were left out of the training set. The same trained classifier was also tested on all other milliseconds within the trial (i.e., the classifier trained on the millisecond 3 seconds before the grayscale image was tested on the next millisecond, and so on, until all time points had been tested with the classifier trained on the original millisecond). This resulted in a kind of “heat map” that shows how well the trained classifier distinguished trial types at each millisecond in a trial.

In the figure below, yellow and green indicate when the classifier was able to use the brain data to distinguish the 2 trial types (e.g., trials following a loud noise versus trials following no loud noise). The map on the left distinguished participants’ expectancies of an upcoming loud noise – that is, if they indicated a high expectancy or a low expectancy of a loud noise. Outlined areas indicate that the classifier was able to reliably tell the 2 trial types apart from the brain data. The main times that distinguished conditions were the very beginning (when participants were actively rating), middle (while they were waiting), and end (when the grayscale circle returned) of each trial.

The map on the right distinguished participants’ experience of a loud noise – that is, whether or not they heard a loud noise previously. Outlined areas indicate that the classifier was able to reliably tell the 2 conditions apart from the brain data. The main times that distinguished conditions were the very beginning (i.e., just after the participants gave a rating and the waiting started), and end of each trial (i.e., when the grayscale circle returned and the potential loud noise was coming up).
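The train-at-one-timepoint, test-at-every-timepoint procedure described above can be sketched in a few lines. This is a toy version under stated assumptions: it uses a simple nearest-centroid classifier (the summary does not say which classifier the researchers used), a single train/test split, and simulated data in which the two trial types differ only at timepoints 3 and 4. All names and numbers are hypothetical.

```python
import random

def centroid(rows):
    """Channel-wise mean of a list of equal-length feature vectors."""
    return [sum(col) / len(rows) for col in zip(*rows)]

def sq_dist(a, b):
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def temporal_generalization(data, labels, train_idx, test_idx):
    """data[trial][time] is a list of channel values; labels are 0 or 1.

    Returns acc[t_train][t_test]: accuracy of a nearest-centroid classifier
    trained at t_train (training trials) and tested at t_test (held-out trials).
    """
    n_times = len(data[0])
    acc = []
    for t_train in range(n_times):
        c0 = centroid([data[i][t_train] for i in train_idx if labels[i] == 0])
        c1 = centroid([data[i][t_train] for i in train_idx if labels[i] == 1])
        row = []
        for t_test in range(n_times):
            hits = 0
            for i in test_idx:
                x = data[i][t_test]
                predicted = 1 if sq_dist(x, c1) < sq_dist(x, c0) else 0
                hits += int(predicted == labels[i])
            row.append(hits / len(test_idx))
        acc.append(row)
    return acc

# Simulated data (not real EEG): 80 trials, 6 timepoints, 4 channels.
# Label 1 ("after loud noise") differs from label 0 only at timepoints 3 and 4.
rng = random.Random(0)
n_trials, n_times, n_channels = 80, 6, 4
informative = {3, 4}
labels = [i % 2 for i in range(n_trials)]
data = [[[rng.gauss(0, 1) + (4.0 if labels[i] == 1 and t in informative else 0.0)
          for _ in range(n_channels)]
         for t in range(n_times)]
        for i in range(n_trials)]

train_idx = list(range(0, 60))   # trials used to train the classifier
test_idx = list(range(60, 80))   # held-out trials used for testing
acc = temporal_generalization(data, labels, train_idx, test_idx)

for row in acc:
    print(" ".join(f"{value:.2f}" for value in row))
```

Each row of the printed grid corresponds to one training timepoint and each column to one testing timepoint, so the grid plays the role of the "heat map": cells with high accuracy mark times at which the brain data separate the two trial types.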

What do these results mean?

The researchers concluded that brain data can be analyzed with great time specificity using objective computational methods. This conclusion is important because traditional average comparisons leave out a lot of information that may be relevant to explaining what parts of an experiment lead to the data that we see. The researchers hope to use these techniques in the future to discover how different types of data can be used to predict each other. For example, can other biological data, such as pupil size changes, be directly linked to brain data recorded from the scalp? How could this help researchers in understanding how people learn to associate two or more objects and outcomes together? Answering these questions will be important for understanding human learning.